Data First: The Principle Most Orgs Skip

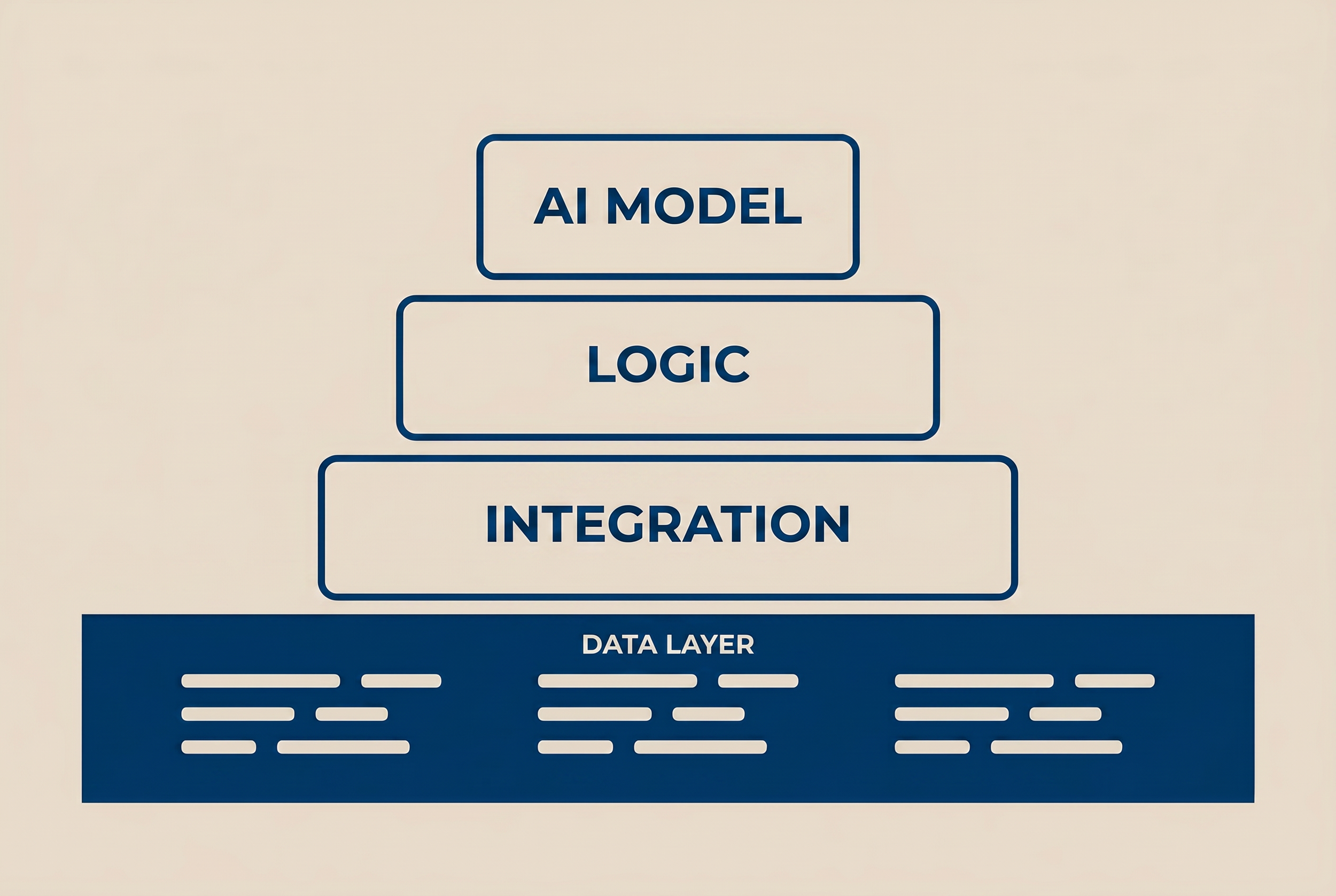

CCC's Data First principle illustrated as a four-layer stack. The Data Layer sits at the foundation in navy with structured data icons. Integration, Logic, and AI Model layers build upward on beige. The visual reinforces the article's core argument: AI is the top of the stack, not the starting point. Every layer above depends on the quality of the data beneath it.

I got a call from a nonprofit ED on a Tuesday morning. She was crying. Their new AI agent had sent a fundraising email to a donor's widow. The donor had been dead for two years. The record was still active.

Her first question: "How did this happen?"

My first question: "Did anyone audit the data before you turned it on?"

She did not have an answer.

That is not a criticism. Most orgs I work with do not have an answer to that question. The question itself is not part of the deployment conversation. The deployment conversation is about features, vendors, and timelines. The data quality conversation is treated as an IT ops task, not a deployment prerequisite.

This is the gap that the CCC AI Center of Excellence's first guiding principle addresses.

The Principle

Data First. No AI feature is activated until data quality is assessed. Clean data is a prerequisite, not a parallel workstream.

That sentence has done real work in client engagements. It is the difference between a 90-day rollout that produces 30% improvement and a 90-day rollout that produces an incident report.

The principle reflects what AI actually does inside a Salesforce org. AI does not improve bad data. AI amplifies bad data. An agent reading a Contact record with three different phone numbers, two different addresses, and a "preferred name" field that says "DO NOT CONTACT" is going to generate output that reflects all of that confusion. The output looks confident because the model is confident. The output is wrong because the input was wrong.

What "Data Quality" Actually Means

In CCC engagements, Data Quality is not a vibe. It is a 10-metric audit with a numerical baseline.

Here are the 10 metrics. Each is scored 0-10. Sum out of 100. I do not start AI work below 70.

Total Contact records vs. unique email rate

Phone number format consistency (US vs. international vs. blank)

State field standardization (state codes vs. full names vs. blank)

Duplicate ratio on Account name (case-insensitive)

Records with last modified date older than 24 months

Records owned by inactive users

Required field completion rate on Lead

Email bounce flag distribution

Records with no related activity in last 12 months

Sharing model exception count (manual shares, dev permissions)

Two hours in Developer Console. Costs zero. Produces a number you can show a board.

The Pattern Across 30 Assessments

Median score: 58.

That is not a typo. Most Salesforce orgs run AI features on top of a data quality baseline that is below the threshold I would consider safe for advisory work.

The shape of the failure is consistent. Categories 1 and 2 (uniqueness and format) are the most common drag. Phone formats range from "(555) 123-4567" to "5551234567" to "+1 555 123 4567" to "ext. 4567" to blank. State fields contain "California," "CA," "Calif.," "Cal," and lowercase variants. Duplicate ratios on Contact records sit between 8% and 22%.

These are not catastrophic problems for a human salesperson typing notes. A human reads the record, makes a judgment, moves on.

Same record fed to an agent that does not know your org's history? The agent treats every variant as a discrete fact. It writes follow-up sequences to three versions of the same person. It triggers Flows that never should have fired. It populates a Data Cloud activation with garbage upstream.

What the Fixes Look Like

The fixes are not exciting. That is the point.

Phone format standardization. A Flow on the Contact and Lead objects that runs on Before Save, normalizes formats to a single pattern (typically E.164 for international compatibility), strips extensions to a separate field. Three hours of work. Affects 100% of new records and any updated existing records. Score on metric 2 goes from 4 to 9 within a week.

State field standardization. Validation rule that enforces ISO state codes. Picklist field replaces text field. Existing records cleaned via Data Loader using a state mapping table. One day of work. Score on metric 3 goes from 5 to 10.

Duplicate Rules with Block action. Most orgs run Duplicate Rules on Allow with Alert. Users see the alert, ignore it, save the duplicate. Switch to Block on Contact, Lead, and Account. Configure Matching Rules to use email plus name fuzzy match. Two hours. Score on metric 4 goes from 5 to 8 immediately, then to 9 after 30 days as users adapt.

Inactive user record reassignment. SOQL query identifies records owned by deactivated users. Reassign in bulk to a service account or active owner. Half a day. Score on metric 6 goes from 3 to 9.

That is four fixes. Score moves from 58 to 78. Below the threshold to above it. Now we can talk about Agentforce.

Why This Cannot Be Parallel Work

Most clients want to run data quality work in parallel with AI deployment. The argument is reasonable: "We're already paying for the engagement, let's get value while the cleanup happens."

The problem is sequence. Agents do not wait. An Agentforce pilot turned on against bad data starts producing bad outputs on day one. Those outputs go into the Decision Log, into reporting, into stakeholder dashboards. By the time the data cleanup catches up six weeks later, the organization has six weeks of bad-output evidence to argue about. The pilot is judged on its early output. The early output was always going to be bad. The pilot fails not because the AI was wrong but because the foundation was wrong.

Sequence matters. Data first, then deployment. Not "data while deployment."

This is the operational meaning of the principle. It is not a slogan. It is a sequencing constraint that protects the engagement from a predictable failure mode.

What to Do This Week

If you are reading this and your org has an Agentforce pilot scheduled in the next 90 days, run the 10-metric audit before the pilot starts. Two hours. Free. Honest answer.

If you score below 70, you have time but not unlimited time. The fixes above are the fastest path to a usable baseline. Most can be done internally if your admin team has Flow and Data Loader experience.

If you score above 70, you are in better shape than most. The CoE engagements then focus on the specific metric where you are weakest, not on rebuilding everything.

Either way, the order is the same. Data first. Then everything else.

The next article in this series covers Principle 2: Human Oversight. Publishing Mon May 11.