Human Oversight: The Difference Between AI and Autopilot

"Human-in-the-loop" appears on every AI vendor pitch deck I have ever seen.

Almost none of those vendors can define what it means in practice.

I have asked the question in maybe forty vendor calls in the last year. The answers fall into three buckets. The first bucket is silence followed by "we have human review built in" with no further detail. The second is a vague reference to a confidence threshold below which a human is consulted. The third, rarest and most useful, is a specific named role with documented review criteria and an escalation path.

The first two buckets are slides. The third is a control.

This article is about the difference, and about how to build the third one inside a Salesforce org.

The Principle

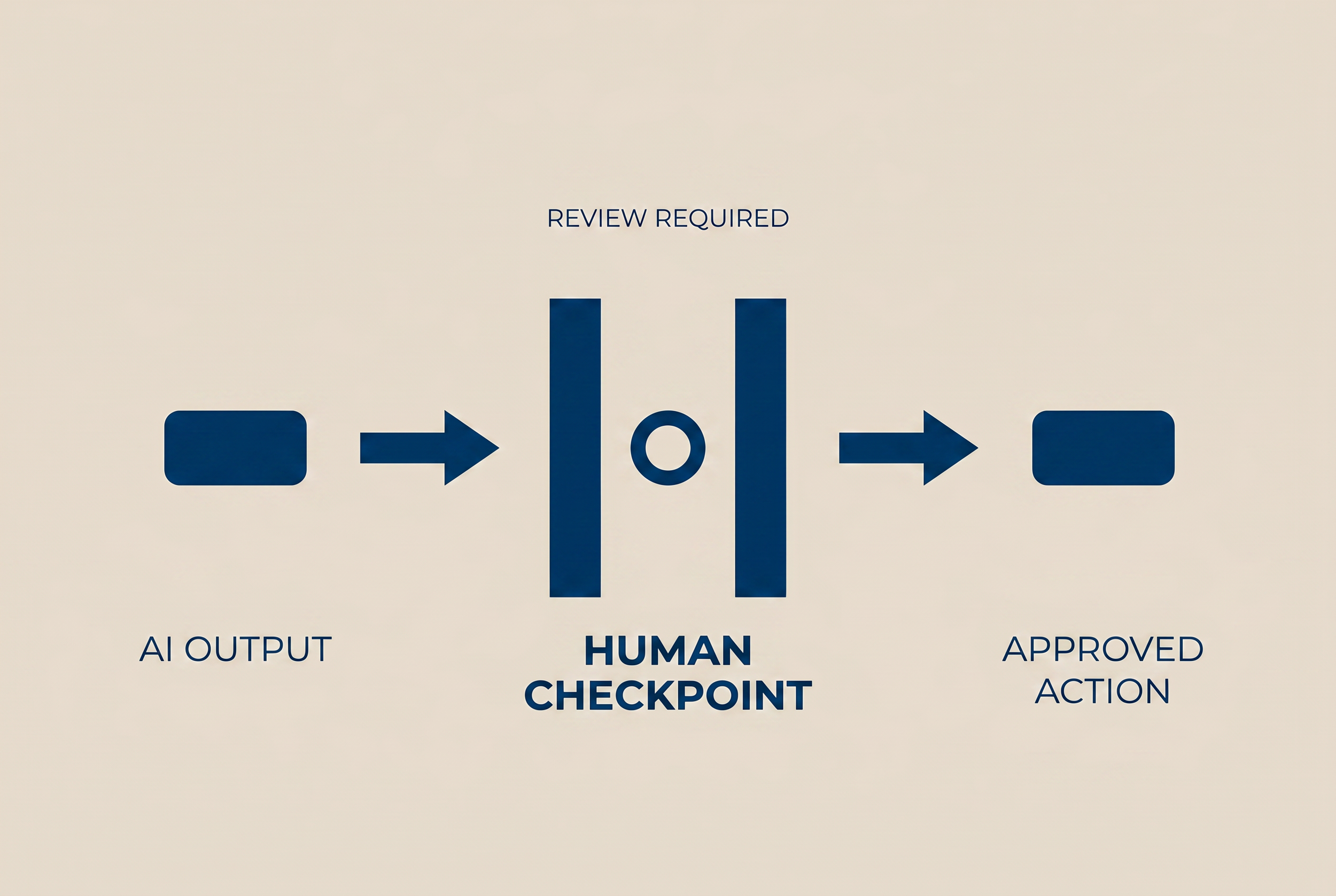

Human Oversight. Every AI-assisted decision has a documented human review gate. Automated actions are bounded by explicit scope limits.

That sentence is the second guiding principle of the CCC AI Center of Excellence. It is the operating answer to the question "what does responsible AI deployment look like in practice?"

The principle has three parts. Each one matters.

Documented. Not "we have a process." Not "the team knows what to do." A written document, version-controlled, that any new hire can read on day one and understand who reviews what.

Human review gate. A specific person, named by role, with the authority to approve, reject, or modify the AI output before it acts. Not a Slack channel. Not "the team." A role with a name on the org chart.

Bounded by explicit scope limits. The AI is allowed to do these things. The AI is not allowed to do those things. The boundary is enforced at the platform level, not at the policy level: Permission Sets, Trust Layer configuration, Topic Classification.

If any of those three parts is missing, "human-in-the-loop" is a slide, not a control.

The Three Tiers

Not every AI decision needs the same level of oversight. Trying to apply the same gate to every output is a fast way to kill adoption while still missing the high-risk decisions.

CCC engagements use a three-tier oversight model. The tier is set during the Design Review checkpoint, before the AI feature is activated.

Tier 1 (Read-only and informational). AI summaries of existing records, suggested next-best-action lists for human review, draft email content. The human reads, decides, acts. AI never modifies data without human action. Oversight is automatic at the action layer (the human has to click). Documentation is light: a one-paragraph use case description and a named owner.

Tier 2 (Suggested action with confirmation gate). AI proposes a record update, a Flow trigger, an external callout. The human sees a confirmation dialog. The human approves or rejects. The AI executes only on approval. Oversight is enforced at the platform layer via a custom confirmation pattern in Lightning. Documentation includes the use case, the confirmation criteria, the approver role, and the escalation path.

Tier 3 (Autonomous action with audit and kill switch). AI executes on its own, but every action is logged in the AI Decision Log. A named human owner reviews the log on a documented cadence (daily or weekly, depending on volume). A kill switch exists at the Permission Set level: revoke the integration user's permissions and the agent stops. Documentation includes everything from Tier 2 plus the audit cadence, the kill switch procedure, and a 30-day post-launch governance review.
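To make the behavioral difference between the tiers concrete, here is a minimal sketch in plain Python. It is not Salesforce code and not the CCC implementation. The names `Tier`, `ProposedAction`, and `OversightGate` are hypothetical, and the in-memory `decision_log` list only stands in for the AI Decision Log.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable, List, Optional


class Tier(IntEnum):
    READ_ONLY = 1    # AI informs, the human acts
    CONFIRM = 2      # AI proposes, the human approves or rejects
    AUTONOMOUS = 3   # AI acts, the human audits the log


@dataclass
class ProposedAction:
    description: str
    execute: Callable[[], None]  # the side effect the AI wants to perform


@dataclass
class OversightGate:
    """Hypothetical gate that decides what happens to an AI-proposed action."""
    tier: Tier
    decision_log: List[str] = field(default_factory=list)  # stand-in for the AI Decision Log

    def handle(self, action: ProposedAction, approved: Optional[bool] = None) -> str:
        if self.tier == Tier.READ_ONLY:
            # Tier 1: the output is only shown; nothing is executed on the AI's behalf.
            return "shown"
        if self.tier == Tier.CONFIRM:
            # Tier 2: execute only after an explicit human approval.
            if approved is True:
                action.execute()
                return "executed"
            return "rejected" if approved is False else "pending"
        # Tier 3: execute without waiting, but record every action for later review.
        action.execute()
        self.decision_log.append(action.description)
        return "executed"
```

The point of the sketch is the default: in Tier 1 and Tier 2, doing nothing means nothing happens. Only Tier 3 acts without a human, and it pays for that with a log entry on every action.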

Most pilot use cases I see should be Tier 1 or Tier 2. Vendors push for Tier 3 because that is what their demo shows. The CCC default is to start at the lowest viable tier and earn the right to move up.
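One way to read "earn the right to move up" is as a documentation check: a use case cannot operate at a tier until that tier's paperwork exists. The sketch below encodes the documentation items from the tier descriptions. The key names and the functions `missing_docs` and `can_operate_at` are hypothetical, and treating documentation as the only promotion criterion is my simplifying assumption, not a rule stated in the model.

```python
from typing import Dict, FrozenSet, Set

# Documentation items per tier, taken from the tier descriptions.
# The string keys are illustrative labels, not field names from any real system.
TIER_1_DOCS: FrozenSet[str] = frozenset({"use_case", "named_owner"})
TIER_2_DOCS: FrozenSet[str] = frozenset(
    {"use_case", "confirmation_criteria", "approver_role", "escalation_path"}
)
# Tier 3 is "everything from Tier 2 plus" three more items.
TIER_3_DOCS: FrozenSet[str] = TIER_2_DOCS | {
    "audit_cadence",
    "kill_switch_procedure",
    "post_launch_review_30_day",
}

REQUIRED_DOCS: Dict[int, FrozenSet[str]] = {1: TIER_1_DOCS, 2: TIER_2_DOCS, 3: TIER_3_DOCS}


def missing_docs(tier: int, provided: Set[str]) -> Set[str]:
    """Return the documentation items still missing for the requested tier."""
    return set(REQUIRED_DOCS[tier] - provided)


def can_operate_at(tier: int, provided: Set[str]) -> bool:
    """A use case may run at a tier only when nothing is missing for that tier."""
    return not missing_docs(tier, provided)
```

For example, `missing_docs(3, set(TIER_2_DOCS))` returns the three Tier 3 additions, which is exactly the gap a Tier 2 use case has to close before it runs autonomously.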

What "Bounded Scope" Looks Like in Salesforce

Scope limits at the policy level are insufficient. "The agent should only update Contact records" written in a Word document does not stop an agent from running an UPDATE on Account records if the integration user has permission.

The boundary has to live in the platform.

Permission Set. Create an AI-specific Permission Set assigned only to the integration user. Grant CRUD access only to the objects in scope. Field-Level Security restricts which fields the agent can read or write.

Trust Layer. Configure data masking for PII fields the agent should not see in logs. Toxicity monitoring on agent outputs. Topic Classification limits the agent to approved subject areas.

Validation Rules. These fire on API inserts, not just UI inserts, so they catch agent attempts to create records that violate business rules.

Flow Entry Criteria. Account for agent context using $Api.Session_ID or integration user identity. Some Flows should run for humans but not for agents. Some should run for agents but not for humans. The entry criteria enforce the difference.

These four mechanisms are the structural enforcement of the human oversight principle. Without them, the principle is aspirational.
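The logic behind a scoped Permission Set plus Field-Level Security is deny-by-default. The sketch below models that logic in plain Python so the behavior is easy to reason about. It is not how Salesforce evaluates permissions internally and it calls no Salesforce API. `AgentScope`, `allows`, and the object and field names in the usage note are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Set


@dataclass
class AgentScope:
    """Hypothetical model of an AI-specific Permission Set with Field-Level Security."""
    # object name -> operations the agent may perform ("create", "read", "update", "delete")
    object_access: Dict[str, Set[str]] = field(default_factory=dict)
    # object name -> fields the agent may write
    writable_fields: Dict[str, Set[str]] = field(default_factory=dict)

    def allows(self, obj: str, operation: str, fields: Iterable[str] = ()) -> bool:
        # Deny by default: an object or operation that was never granted is out of scope.
        if operation not in self.object_access.get(obj, set()):
            return False
        # Writes must also stay within the writable fields for that object.
        if operation in ("create", "update"):
            return set(fields) <= self.writable_fields.get(obj, set())
        return True
```

With a scope of `AgentScope({"Contact": {"read", "update"}}, {"Contact": {"Phone", "Email"}})`, the call `allows("Account", "update", ["Name"])` returns `False` without anyone having written a rule about Account. That is the property the Word document cannot give you.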

The Kill Switch

Every Tier 3 deployment needs a documented kill switch.

The kill switch is not the same as turning off Agentforce. Turning Agentforce off is a vendor-level control. The CCC kill switch is org-level: revoke the integration user's Permission Set assignment. The agent loses access immediately. Other AI features in the org continue working. The action is reversible, surgical, and does not require a vendor support call.

The kill switch procedure is one page. It documents who has authority to fire it, what triggers warrant firing it, the exact Setup path (Setup > Users > integration user > Permission Set Assignments > Edit Assignments > Remove), the post-firing audit steps, and the conditions under which the agent can be reactivated.

Every client engagement that includes a Tier 3 deployment includes a kill switch dry run before go-live. We fire it in sandbox. We confirm the agent stops. We confirm reactivation works. The runbook is signed off by the named owner before the agent is allowed to operate in production.
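The dry run is a three-step check: fire, confirm the agent stops, confirm reactivation works. Here is a minimal simulation of that sequence in plain Python, with one extra check that other assignments are untouched. `Org`, `kill_switch_dry_run`, and the user and Permission Set names in the usage note are hypothetical. A real dry run happens in a sandbox through Setup, not through this code.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Org:
    """Toy stand-in for a sandbox: user name -> assigned Permission Set names."""
    assignments: Dict[str, Set[str]] = field(default_factory=dict)

    def assign(self, user: str, perm_set: str) -> None:
        self.assignments.setdefault(user, set()).add(perm_set)

    def revoke(self, user: str, perm_set: str) -> None:
        self.assignments.get(user, set()).discard(perm_set)

    def can_act(self, user: str, perm_set: str) -> bool:
        return perm_set in self.assignments.get(user, set())


def kill_switch_dry_run(org: Org, agent_user: str, agent_perm_set: str) -> Dict[str, bool]:
    """Fire the switch, verify the stop, reactivate, verify the restart."""
    # Snapshot every other user's assignments to show the switch is surgical.
    others_before = {u: set(p) for u, p in org.assignments.items() if u != agent_user}
    results = {"active_before": org.can_act(agent_user, agent_perm_set)}

    org.revoke(agent_user, agent_perm_set)  # fire
    results["stopped_after_fire"] = not org.can_act(agent_user, agent_perm_set)
    others_after = {u: set(p) for u, p in org.assignments.items() if u != agent_user}
    results["others_untouched"] = others_before == others_after

    org.assign(agent_user, agent_perm_set)  # reactivate
    results["restored_after_reactivation"] = org.can_act(agent_user, agent_perm_set)
    return results
```

In this sketch a dry run passes only if all four values come back `True`. Any `False` is a finding to resolve before the named owner signs the runbook.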

This is the operational difference between AI and autopilot. Autopilot has no kill switch. AI has one, documented, tested, and never used in anger because the rest of the controls held.

Where This Connects

Human Oversight is the second of six guiding principles. The first (Data First) governs whether the AI is operating against trustworthy input. The second (Human Oversight) governs whether the AI's actions are bounded and auditable. The principles compound. An AI deployment with bad data and good oversight is still a problem. An AI deployment with good data and bad oversight is a different problem. Both have to be in place.

The next article in this series covers Principle 3: Transparency. Publishing Wed May 13.