Transparency: Writing AI Governance Docs Four Audiences Will Actually Read

A federal program manager called me last fall. She had a 47-page AI governance policy from her vendor. Her board meeting was in nine days. She had been told to "summarize this for executives."

She had read the document four times. She still could not describe what it committed her agency to do.

I read it that night. Same experience. The document was technically complete. It cited NIST. It cited OMB M-25-21. It defined fourteen roles and twenty-two control objectives. It did not answer the only question her board would ask: "What happens if the AI is wrong?"

That document was written for an audience that does not exist. It was too operational for executives, too philosophical for engineers, too compliance-heavy for project managers, and too jargon-soaked for the people who would actually use the system.

This is what the third guiding principle of the CCC AI Center of Excellence addresses.

The Principle

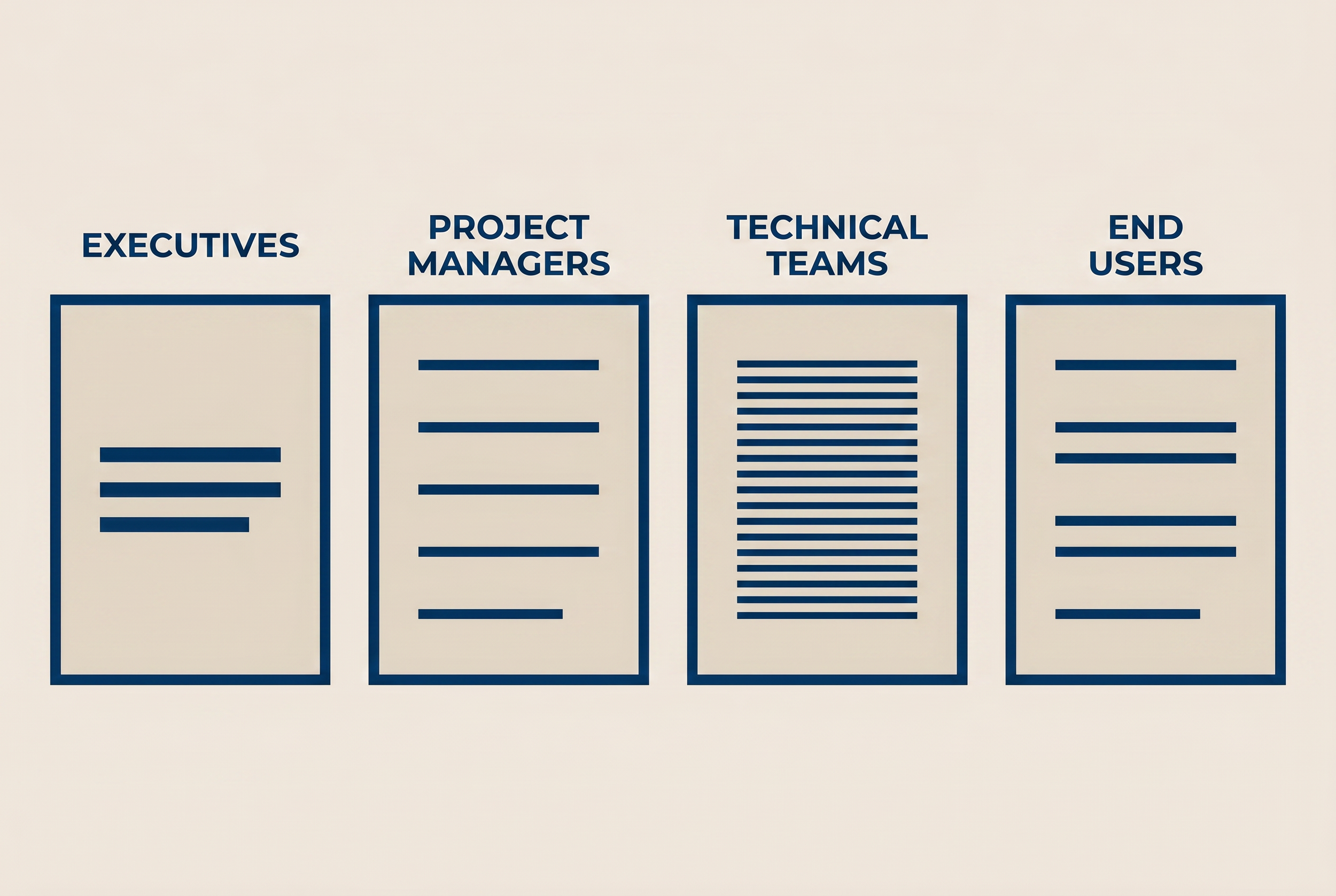

Transparency. AI governance documentation is written in plain language for four audiences: executives, project managers, technical teams, and end users.

That sentence has saved more board presentations than I can count. It is the difference between a governance program that gets funded and one that gets shelved as "too complicated to act on."

Most organizations write one document and expect four audiences to read it. The document then satisfies none of them. Executives skip it after the first three pages of NIST function definitions. Project managers cannot find the timeline. Engineers cannot find the configuration steps. End users do not know it exists.

The fix is not a longer document. The fix is four documents, each written for one reader.

Who the Four Audiences Are

Each audience has a different question. Each question has a different format.

Executives

Their question: "What does this commit us to, and what is the worst case?"

The format: a one-page summary. Three sections. What we are doing. What could go wrong. What we will do if it goes wrong. No NIST citations. No control numbers. The dollar amount of the prevented failure goes here.

A working example, abbreviated: "We are activating Agentforce for case routing. The agent will read open cases and assign them to teams. Risk: the agent could misroute a HIPAA-flagged case. Control: a human reviews every routing decision for cases tagged Confidential before assignment becomes final. Rollback: we can disable agent routing in 15 minutes and revert to the manual process."

That is 56 words. It answers the only three questions an executive needs answered.
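One way to keep a summary in this shape is to lint it before it goes to the board. The sketch below is illustrative only: the function name `lint_executive_summary`, the 100-word budget, and the banned-term list are assumptions made for this example, not part of any CCC tooling. It checks that the Risk, Control, and Rollback labels are present, that the text stays under the budget, and that no framework citations have crept in.

```python
# Hypothetical lint for a one-page executive summary.
# The labels come from the example above; the budget and banned terms are assumptions.
REQUIRED_LABELS = ("Risk:", "Control:", "Rollback:")
BANNED_TERMS = ("NIST", "OMB")  # framework citations belong in other documents
WORD_BUDGET = 100               # assumed ceiling, adjust to taste


def lint_executive_summary(text: str, budget: int = WORD_BUDGET) -> list[str]:
    """Return a list of problems; an empty list means the summary passes."""
    problems = []
    word_count = len(text.split())
    if word_count > budget:
        problems.append(f"too long: {word_count} words (budget {budget})")
    for label in REQUIRED_LABELS:
        if label not in text:
            problems.append(f"missing section: {label}")
    for term in BANNED_TERMS:
        if term in text:
            problems.append(f"framework citation found: {term}")
    return problems


SUMMARY = (
    "We are activating Agentforce for case routing. "
    "The agent will read open cases and assign them to teams. "
    "Risk: the agent could misroute a HIPAA-flagged case. "
    "Control: a human reviews every routing decision for cases tagged "
    "Confidential before assignment becomes final. "
    "Rollback: we can disable agent routing in 15 minutes and revert "
    "to the manual process."
)

print(lint_executive_summary(SUMMARY))  # prints []
```

A summary that drops the rollback sentence, or quotes a control number from a framework, comes back with a named problem instead of an empty list.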

Project Managers

Their question: "What is on my Gantt chart, who owns it, and when is it done?"

The format: a milestone document with named owners, dates, and acceptance criteria. The Six Governance Checkpoints map cleanly here. Each checkpoint is a milestone. Each has a gate criterion. The PM does not need to know what NIST function the checkpoint maps to. The PM needs to know whether Gate 4 passed.

A working example: "Gate 4, Agentic Governance Review. Owner: Architect. Due: June 14. Pass criteria: Agent Fabric guardrails configured, Session Tracing enabled, human confirmation gates set for record creation. Evidence: Agentic Governance Checklist signed off."

A PM can manage that. A PM cannot manage "deliver trustworthy AI deployment per NIST AI RMF function GOVERN."
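The gate in that example is structured enough to track as data rather than prose. A minimal sketch follows, assuming a hypothetical `Gate` class; the field names and the true/false status values are invented for illustration, and only the gate name, owner, due date, criteria, and evidence come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Gate:
    """One governance checkpoint as a PM would track it (hypothetical structure)."""
    number: int
    name: str
    owner: str
    due: str                        # kept as text; the example gives no year
    pass_criteria: dict[str, bool]  # criterion -> has it been met?
    evidence: str
    evidence_signed_off: bool = False

    def passed(self) -> bool:
        # A gate passes only when every criterion is met and the evidence is signed.
        return self.evidence_signed_off and all(self.pass_criteria.values())

    def open_items(self) -> list[str]:
        # Everything still standing between the project and a passed gate.
        items = [c for c, met in self.pass_criteria.items() if not met]
        if not self.evidence_signed_off:
            items.append(f"{self.evidence} not signed off")
        return items


gate_4 = Gate(
    number=4,
    name="Agentic Governance Review",
    owner="Architect",
    due="June 14",
    pass_criteria={
        "Agent Fabric guardrails configured": True,   # status values are made up
        "Session Tracing enabled": True,
        "Human confirmation gates set for record creation": False,
    },
    evidence="Agentic Governance Checklist",
)

print(gate_4.passed())      # prints False
print(gate_4.open_items())
```

The point of the structure is that "did Gate 4 pass?" becomes a yes or no with a list of open items, which is the level of detail a status report needs.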

Technical Teams

Their question: "What do I configure, in what order, and how do I know it worked?"

The format: a runbook. Setup steps in order. Configuration values. Test cases. Validation queries. Screenshots are useful. Code samples are essential. The runbook should be specific enough that an admin who has never seen the project can replicate the setup from the document alone.

The bar I use: if a CCC consultant left tomorrow, the next consultant should be able to maintain the deployment from the runbook without re-engaging the original architect.

End Users

Their question: "What do I do differently starting Monday, and what do I do if something looks wrong?"

The format: a one-page job aid. Two columns. What you used to do. What you do now. Plus a single sentence on how to escalate when the AI gives an answer that does not look right. End users do not need to know how the AI works. End users need to know what changed in their daily work.

The shortest end-user document I have ever written was four sentences. The deployment ran for two years without a user-side incident.

What Goes Wrong When You Skip This

Three failure patterns show up in the field.

The first: governance written by compliance, ignored by everyone. The document satisfies an audit. It does not change behavior. The AI deploys. The first incident reveals that nobody on the operations team knew the policy existed.

The second: governance written by engineering, rejected by executives. The document is technically rigorous. It cannot be summarized. The board asks for a one-pager. Engineering produces a longer document. The board defers the project.

The third: governance written by a vendor, owned by no one. The 47-page document I described at the top of this article. Vendor delivers it. Client signs off without reading. Six months later, an incident occurs and nobody can locate the runbook.

The pattern in all three: one document, wrong audience, no plain language.

The Template I Use

For every CCC AI governance engagement, the deliverable set includes four documents, one per audience. Same content, different formats.

| Document | Audience | Length | Format |
|---|---|---|---|
| Executive Summary | Board, executives, budget owners | 1 page | Three sections: what, risk, rollback |
| Project Plan | PM, scrum master, delivery owner | 5-10 pages | Milestone document with owners and gates |
| Admin Runbook | Architect, admin, integration team | 15-30 pages | Step-by-step configuration and validation |
| User Job Aid | End users in affected roles | 1 page | What changed, how to escalate |

Same governance program. Four packaging variants. Each one answers the question that audience asked.

The four documents are built once. They are reviewed quarterly. They are version-controlled in Confluence so the source of truth is clear.
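Because the template is fixed, the quarterly review can start with a mechanical check before anyone reads a word. The sketch below is an assumption about how that might look, not an existing CCC tool: `TEMPLATE` and `review_deliverables` are hypothetical names, and the page ranges are taken from the table above.

```python
# Document name -> (audience, minimum pages, maximum pages), from the table above.
TEMPLATE = {
    "Executive Summary": ("executives", 1, 1),
    "Project Plan": ("project managers", 5, 10),
    "Admin Runbook": ("technical teams", 15, 30),
    "User Job Aid": ("end users", 1, 1),
}


def review_deliverables(page_counts: dict[str, int]) -> list[str]:
    """Compare a deliverable set against the template and list what is off."""
    findings = []
    for doc, (audience, low, high) in TEMPLATE.items():
        if doc not in page_counts:
            findings.append(f"missing {doc} for {audience}")
        elif not low <= page_counts[doc] <= high:
            findings.append(
                f"{doc} is {page_counts[doc]} pages, expected {low}-{high}"
            )
    return findings


# Hypothetical engagement: no job aid yet, and the summary has grown to three pages.
print(review_deliverables({
    "Executive Summary": 3,
    "Project Plan": 8,
    "Admin Runbook": 22,
}))
```

A check like this catches the two most common drifts early: an audience left without a document, and a one-pager that has quietly stopped being one page.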

The Cost of Doing This

Writing four documents instead of one looks like far more work. It is less than it looks. The total page count is similar. The governance content is identical. What changes is the framing, the language, and the level of abstraction at each layer.

The first version takes about 30% longer than a single-document approach. Every version after that is faster, since the four-document template becomes a checklist.

The savings show up later. Boards approve faster. PMs deliver on schedule. Admins maintain the deployment without re-engaging the consultant. Users do not file tickets that begin "I think the system is broken..."

That last line is the one that pays for the additional 30% of writing time. End users who understand the system file fewer support tickets. Support tickets cost more than documentation.

What's Next

The next article in this series covers Accountability. When the AI is wrong, who picks up the phone? That principle is the one most often missing from the policy documents I review. It is also the one regulators ask about first.

Until then: if you have a board mandate and a 47-page governance policy that nobody can summarize, the AI Readiness Scorecard is the right starting point. It surfaces the audience-mismatch problem in 15 questions.